Real-time Semantic 6D Pose Estimation

Automatically selects semantic masks and launches FoundationPose in real time

Skills

3D Computer Vision, Vision Foundation Models, Real-Time Deployment, RGB-D, Camera Calibration

Frameworks: PyTorch, OpenCV, Open3D, TensorRT, ONNX

Summary

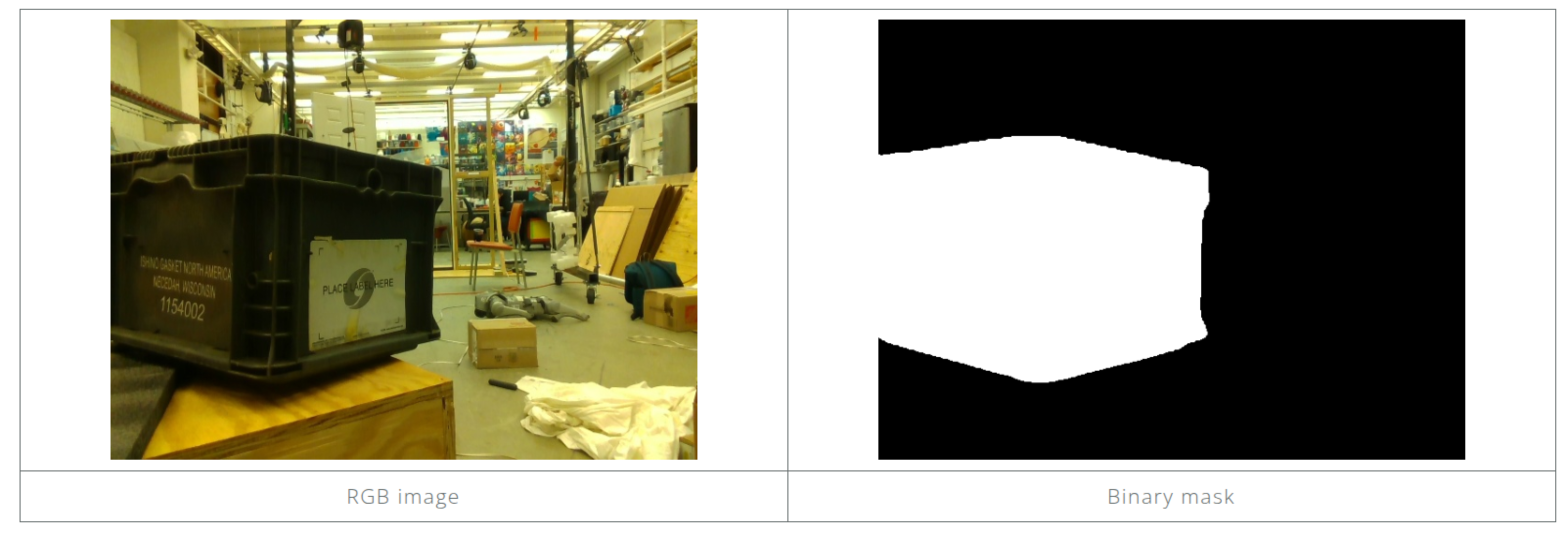

Deployed a real-time 6D pose estimation system that uses RGB-D input from an Intel RealSense D435i camera. The first frame is passed to a CLIP-guided mask generation step based on FastSAM, which produces a semantic mask of the target object. NVIDIA’s FoundationPose model then consumes this mask as semantic guidance and, using a pre-existing mesh of the target object (in this case, either a tote or an Xbox controller), provides single-shot 6D pose predictions. The mesh was captured with a LiDAR-enabled iPhone through an AR application and then refined manually in Blender to ensure accuracy.
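The CLIP-guided mask selection step can be illustrated as choosing, among candidate masks, the one whose image embedding is most similar to the embedding of a text prompt. This is a minimal sketch under stated assumptions, not the project's actual code: it assumes each FastSAM candidate mask has already been cropped and encoded by a CLIP image encoder, and the prompt (e.g. "an Xbox controller") encoded by the CLIP text encoder; neither encoding step is shown. The names `select_mask_by_text` and `cosine_similarity` and the `min_score` threshold are hypothetical, and the toy 3-D vectors stand in for real CLIP embeddings.

```python
import math
from typing import Optional, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as "no match"
    return dot / (norm_a * norm_b)


def select_mask_by_text(
    mask_embeddings: Sequence[Sequence[float]],
    text_embedding: Sequence[float],
    min_score: float = 0.0,
) -> Optional[int]:
    """Return the index of the candidate whose embedding best matches the prompt.

    mask_embeddings[i] is assumed (hypothetically) to be the CLIP image
    embedding of the crop around candidate mask i. Returns None when there
    are no candidates or the best score falls below min_score.
    """
    best_index: Optional[int] = None
    best_score = -math.inf
    for i, emb in enumerate(mask_embeddings):
        score = cosine_similarity(emb, text_embedding)
        if score > best_score:
            best_index, best_score = i, score
    if best_index is None or best_score < min_score:
        return None
    return best_index


# Toy usage: 3-D vectors standing in for real CLIP embeddings.
candidates = [[1.0, 0.0, 0.0], [0.6, 0.8, 0.0], [0.0, 0.0, 1.0]]
prompt = [0.0, 1.0, 0.0]
print(select_mask_by_text(candidates, prompt))  # prints 1
```

One design option shown here is returning `None` below a threshold, so a pipeline could skip launching pose estimation on a frame where no candidate plausibly matches the prompt, instead of registering the mesh against the wrong object.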